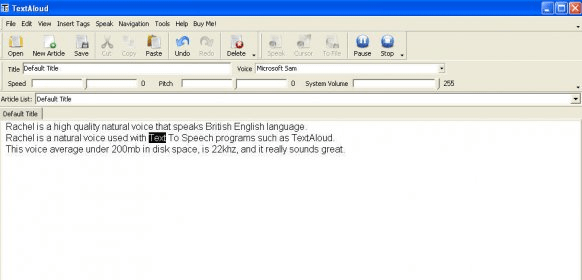

An A to Z of all the application fields that benefit from speech:

- AAC solutions (Augmentative and Alternative Communication) allow users with impairments or restrictions related to the production or comprehension of spoken or written language to live and communicate with their environments with more autonomy. Acapela voices enrich AAC solutions and communicators, turning any written words into spoken messages.
- Access information: SMS and e-mail reading, voice servers and call centres for services 24/7, passenger information systems, public address.
- ATMs and ticketing systems are made more ergonomic and user friendly thanks to speech solutions, which provide end users with on-the-fly information, vocal feedback about ongoing navigation, and help with transactions through vocal support.
- Autonomous vehicles: voice is the most relevant interface to guarantee real-time passenger information for a safe and pleasant journey.
- Drive safely: voice guidance, traffic information, e-mail reading, onboard alerts and diagnostic systems, online reservations, Internet access and more, keeping hands on the wheel and eyes on the road.
- Learn and play: language learning and e-learning, edutainment, talking characters, proofreading and productivity tools, online talking assistance, talking browsers and more.
- Move: listen to your favourite podcast or audio book on the go, keep track of the news, traffic status, pedestrian navigation, sports results or your e-mails.
- Public transport: easy integration in the information systems to deliver real-time, personalized and accurate information to the passengers.
- Robots and smart toys: voice is essential to make companions and humanoids conversational.

Acapela Group is actively working on Deep Neural Networks. This machine learning revolution is delivering on its promise as it enters the Text-to-Speech arena. Neural networks have already revolutionized artificial vision and automatic speech recognition.

Neural networks are a set of algorithms, modelled loosely after the human brain, that are designed to recognize patterns. They interpret sensory data through a kind of machine perception, labelling or clustering raw input. The patterns they recognize are numerical, contained in vectors, into which all real-world data, be it images, sound, text or time series, must be translated.

A deep neural network (DNN) is an artificial neural network (ANN) with multiple hidden layers between the input and output layers. DNNs can model complex non-linear relationships. We use them in Text-to-Speech to learn the relationship between a set of input texts and their acoustic realizations by different speakers.
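To make the idea of "multiple hidden layers between the input and output layers" concrete, here is a minimal sketch of a forward pass through a small fully connected network in plain Python. It is an illustration only, not Acapela's model: the layer sizes, the `tanh` activation, the random untrained weights, and the names `make_layer`, `dense`, `forward`, `text_features` and `acoustic_frame` are all assumptions made for the example.

```python
import math
import random


def make_layer(n_in, n_out, rng):
    """Return (weights, biases) for one fully connected layer.

    Weights are random and untrained; a real system would learn them
    from paired text and audio data.
    """
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense(x, weights, biases):
    """Affine map: one output value per row of the weight matrix."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def forward(x, layers):
    """Pass x through every layer: tanh on hidden layers, linear output."""
    for i, (weights, biases) in enumerate(layers):
        x = dense(x, weights, biases)
        if i < len(layers) - 1:
            # The non-linearity is what lets stacked layers model
            # non-linear input/output relationships.
            x = [math.tanh(v) for v in x]
    return x


rng = random.Random(0)

# Hypothetical sizes: 4 input features, two hidden layers of 8 units,
# 3 output values standing in for acoustic parameters.
sizes = [4, 8, 8, 3]
layers = [make_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]

# Text must first be translated into a numeric vector (values made up here).
text_features = [1.0, 0.0, 0.5, -0.25]
acoustic_frame = forward(text_features, layers)
print(len(acoustic_frame))  # prints 3
```

The input is a vector of numbers, as the text above requires for all real-world data, and each hidden layer applies a linear map followed by a non-linearity. Training, which is where the relationship between texts and their acoustic realizations would actually be learned, is deliberately left out of this sketch.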